By Pau Aleikum, edited by Jaya Bonelli

This article was written after reading Atomless’s work on the idea that we should stop calling today’s systems “artificial intelligence” and call them Predictive Capita instead.

Generative AI systems are built to predict. They look at very large archives of past behavior and past expression, compress them into numerical form, and then, when asked, produce the most likely continuation. That continuation might be a sentence, a line of code, a style of image, a melody, or a suggested decision. The business model is to sell these likely continuations in many settings (search, chat, advertising, content production, software development, customer service), because reducing uncertainty is invaluable. The emphasis is not on understanding or insight; it is on automation of prediction at scale. History, in this design, becomes feedstock for forecast.

This framing links cleanly to Marx’s idea of dead labour: work done in the past that hardens into tools and routines, then pushes back to haunt living workers. This is exactly what predictive systems do. To build their datasets, they take in yesterday’s articles, posts, code, drawings, videos, and recordings, often gathered without explicit consent, and fold them into a model that can imitate their patterns. The value offered to those who might buy and use these predictive systems is speed and apparent fluency (and with that, savings on long-term costs), but the cost is shifted onto those whose work was scraped, onto the energy and water that keep the servers cool, and onto the workers who label, rate, and correct outputs so the model looks polished. This is pure necrosploitation: extracting value from the work of the living and the dead while treating that work as if it were ownerless.

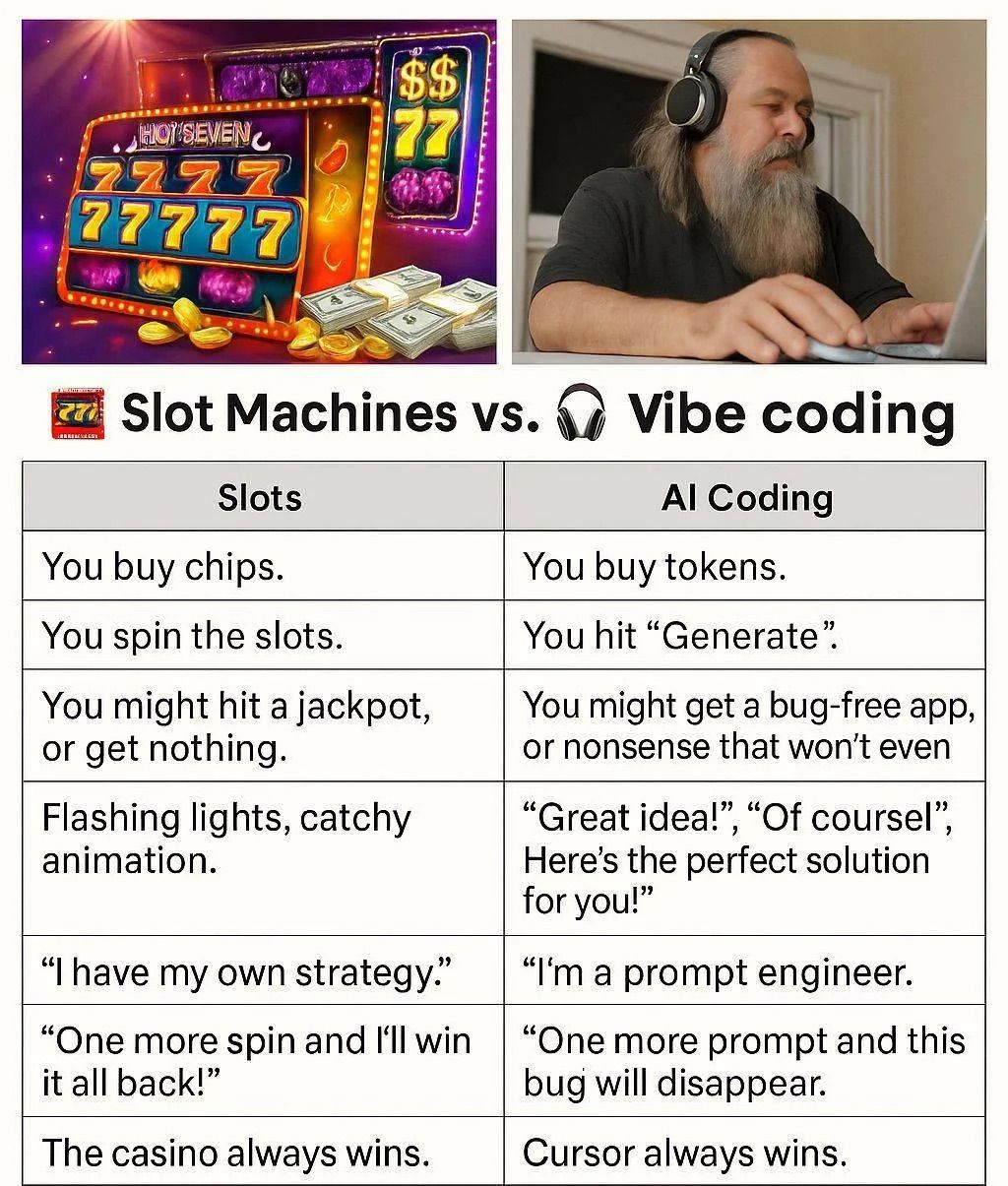

The marketing line of such systems says that they “open creativity to everyone”, but what they really open is a new path for accumulation: more people can produce outputs quickly, and the outputs don’t all need to be good; there are just more of them. A big consequence of this is that, in many areas, necessary friction - all the small resistances through which we learn and decide - has dissolved. Take “vibe coding” as a clear example: friction here means reading code before we run it, editing a paragraph until the claim is right, debugging a failing test, negotiating a draft with a colleague. But now, all you need to do is describe the rough goal of your application in the chat, copy the generated code and run it, paste the error into the same chat, accept the suggested changes, ship - and you’re done. The appeal is obvious and I myself have experienced it. You can get a live working website in an afternoon - a particularly useful feature if, for example, you are dealing with an urgent matter and need a webpage to go out ASAP with information and resources.

Without doubt, some friction is truly a waste and we would do better without it. But when friction removal becomes the rule, trade-offs appear. People skip the parts of the work where understanding is formed. The result and the process both feel smooth and quick, but the skill surface erodes. This is not just true of coding. Take writing: after a few days of auto-complete, it becomes harder to start a sentence without a gray shadow offering to finish it. After a few weeks of one-click edits, the time needed for a deliberate edit feels like a nuisance rather than a step that produces clarity.

The move is not only to help us act faster; it is to act in our place. Social media platforms learned to grab and keep our attention by logging what we looked at and then showing more of that. They curated content for us - but they didn’t necessarily create it. Now, the new systems try to simulate both sides of the interaction. With every interaction, they collect traces of your work (keystrokes, cursor heatmaps, partial drafts…) and then use those to train the next version. This enables these systems to learn not only what you will click on but, above all, how you will decide. The system becomes the main “doer”: it suggests first, we rubber-stamp, and we lose part of our agency in the process.

The longer arc has been described by several thinkers. It starts with Marx: first we lose hold of the thing we make, then the way we make it, then the relation with those we make it with, and finally the sense that choosing and meaning are ours. Mark Fisher describes the way culture loses the feeling that other and alternative futures are possible; old styles are revived and remixed while new structures feel out of reach. Franco Berardi describes the squeeze on bodies and minds when signs, metrics, and screens set the pace for everything else. Read together, they sketch a clear endpoint: we accept a shift into content that we are alienated from, because it’s all plausible; everything looks and sounds like things we’ve seen before. The deck is fine because it looks like last quarter’s; the sentence is fine because it looks like it could belong in the previous report. But as Rachel Barr warns, “environments built for metrics eventually become uninhabitable to the people inside them”.

This is a consequence of information overload. What used to be a side effect of the network is now a mandate. Content is produced at a staggering rate - a lot of it by machines iterating over other machines. It makes us feel as if the failure to keep up is a private flaw, like a kind of informational FOMO: “I should know this”. The solution is painted to be more automation - let a model do the tedious stuff, and you’ll have time for the rest. But the more we outsource, the more our individual trust in our ability to evaluate claims on our own shrinks. This is infoxication at its finest: a steady paralysis that invites us to let the machine be our filter for nearly everything, because we don’t trust ourselves to be as good as it is.

------------------------------------------------

But it’s too late to be pessimistic…

Now, it is reasonable - and necessary - to ask what can be done that is practical and not only rhetorical. Here are three key pathways we try to maintain.

1. A first answer is to keep certain kinds of friction on purpose. Friction here isn’t pain; it’s the small steps where skill and judgment grow. And because this topic can tilt toward slogans, here are some concrete recommendations:

E.g.:

Instead of using ChatGPT to draft an email, draft it yourself, then ask ChatGPT to tidy up the grammar.

For image generation: start with a quick sketch or two reference images before you prompt to let your imagination run wild, cap yourself at a few generations, and write one sentence about what the picture needs to say.

If you are a developer, use a code assistant to speed up rote tasks, but make reading and testing non-negotiable, and block generated code from entering parts of the system where failure is costly.
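For the developer case, here is one way such a rule could look in practice. It is only a minimal sketch: the protected paths, the `Change` record, and the idea that authors declare assistant use themselves are all assumptions made for illustration, not a description of any existing tool.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase

# Hypothetical glob patterns for the parts of a system where failure is costly.
PROTECTED_PATTERNS = ["payments/*", "auth/*", "migrations/*"]


@dataclass
class Change:
    path: str             # file touched by the change
    ai_assisted: bool     # declared by the author (an assumption of this sketch)
    human_reviewed: bool  # a person has read and tested the change


def is_protected(path: str) -> bool:
    """Return True if the path matches any protected pattern."""
    return any(fnmatchcase(path, pattern) for pattern in PROTECTED_PATTERNS)


def blocked_changes(changes: list[Change]) -> list[str]:
    """List the paths of assisted changes that should not be merged as-is.

    An assisted change is blocked if it touches a protected area,
    or if nobody has read and tested it yet.
    """
    blocked = []
    for change in changes:
        if not change.ai_assisted:
            continue  # hand-written changes follow the normal review process
        if is_protected(change.path) or not change.human_reviewed:
            blocked.append(change.path)
    return blocked


if __name__ == "__main__":
    proposed = [
        Change("payments/ledger.py", ai_assisted=True, human_reviewed=True),
        Change("docs/readme.md", ai_assisted=True, human_reviewed=True),
        Change("ui/button.py", ai_assisted=True, human_reviewed=False),
    ]
    for path in blocked_changes(proposed):
        print(f"blocked: {path}")
```

The point of the sketch is less the code than the decision it encodes: someone has to name, in advance, the places where speed is not worth the risk.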

------------------------------------------------

2. A second answer is to keep relationships in view and in check. Studios, workshops, reading groups, labs, and classrooms only stay healthy if some of their activity is off the productivity meter. These are places where people learn from each other and pass on standards that no model can infer on its own.

E.g.:

If you use work or ideas from someone, cite or pay them.

When you work on a project, make it clear whether and how model assistance was used.

Protect time for work that is not constantly instrumented.

In a classroom setting, let students experiment with generators, then require them to annotate what they kept, what they cut, and why.

------------------------------------------------

3. A third answer is technical and civic. Many hope that open source will pull these systems out of the hands of a few firms. Open code helps, but it is not everything. We should also think about governance, compute, energy, data access… No single element solves the whole problem, but each one puts bounds around growth, adds friction, and creates handles for action. Together they also make it easier for cities, schools, and co-ops to run tools that serve their needs rather than absorbing tools that dictate them.

E.g.:

The idea of neural mortality: models that forget by rule and expire on a schedule, such that they must be retrained, with that retraining governed by those affected.

Scoping: smaller models tuned for clear domains under clear accountability.

------------------------------------------------

There is also a personal dimension that is not merely private. Wendell Berry’s advice to step outside and pay attention to land and season is practical and meaningful, especially in an era that wants everything to be instantaneous and weightless. Walking without a goal, tending a garden, cooking slowly, making something by hand: these are not frivolous lifestyle embellishments. They are ways to keep your sense of time and meaning, and to link it to tangible bodies and places. And if some portion of your day is shaped by things that do not obey those patterns of efficiency and optimisation, you are less likely to fall under the diktat of the predictable and the predicted. This is about leaving room for choice - forming a kind of “cognitive resistance” to what is merely more likely.

One last question deserves a direct answer: can generative systems be separated from those corporate incentives that push toward maximal capture? In part, yes, if communities are willing to build and fund shared infrastructure. That, for example, can mean cities and co-ops sponsoring domain-specific models that help with care, housing, transit, or local law, with strict limits on data retention and clear paths for redress. It implies standards, benchmarks, and physical bodies and unions at the tables where tools are adopted and decided on, so that work is shaped with prediction in mind, not merely by it. It also means accepting that some things will stay slower and less glossy, because the aim is not a race to make everything instant, but a steady practice of making things fit people who live and evolve.

Predictive systems thrive on sameness, speed, and a steady flow of past into future. The most reliable ways to resist those pulls are simple, specific habits that keep understanding in the loop and keep relations in view. So, do preserve some friction. Keep part of your process off the meter. Choose a few places where you will stay a little unpredictable, a little unaligned, a little hard to model. Those places are not refuges. They are the ground on which meaning can still be made, rather than only predicted.