Most AI image tools act as if they can see the whole world at once. You type a short line, and they hand back an image that looks neat and sure of itself. Yet beneath that neatness sits a simple fact: these tools learn everything they seem to know from piles of photos and drawings that other people posted online. This pool of visual data is anything but neutral. It leans heavily toward the places, faces, rooms, and objects that get shared the most, to the point that kitchens look like showrooms, work clothes look freshly pressed, and streets look like travel ads.

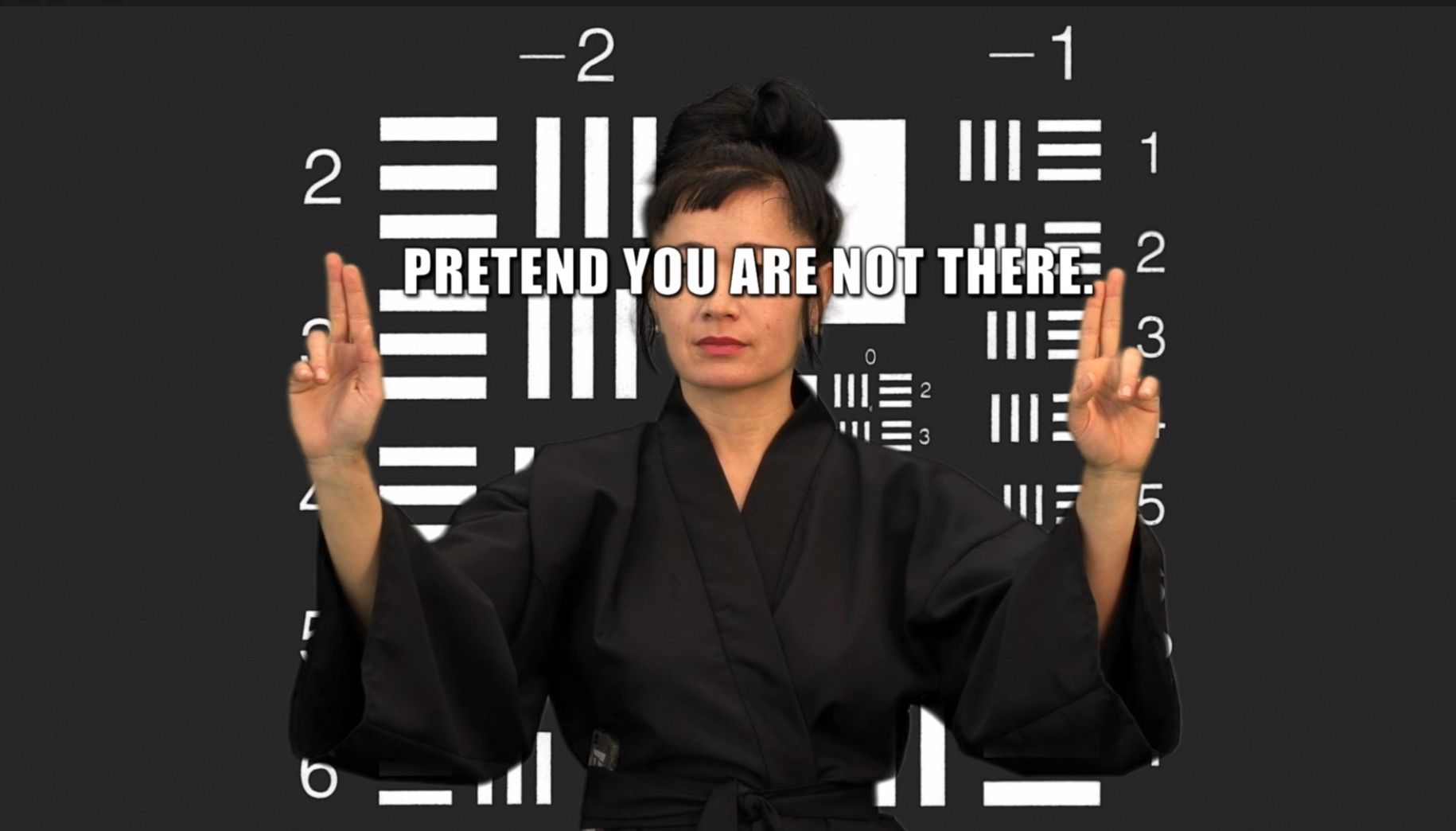

Let’s start with a small but stubborn claim: “more context beats more data”. Yet most text-to-image systems are built on the opposite idea. Feed the machine a near-infinite buffet, smooth out the rough edges with some clever math, and then you can serve a clean and complete “view from everywhere”. Donna Haraway had a phrase for that fantasy long before prompt boxes existed: the “god trick”, a gaze that claims to be “a conquering gaze from nowhere”. To perform the god trick is to claim to have some kind of supreme power of “objectivity”: to be a disembodied, reigning authority of knowledge and representation. The cure she offered still holds: admit the viewpoint you are seeing from, and make that place part of the method - this is what she calls “situated knowledges”.

You can feel why this matters the moment you try to render a memory that is deeply local. As we discussed in a previous article, we kept running into the same problem: someone would describe a memory and recall certain small details - for example, a thin scratch on a kitchen tile where a chair would always catch, especially on Saturday mornings, when the house grew more crowded with visitors. But the image output by the model would show perfect, flawless, shiny flooring. Or, say the person recalled the heavy, dented pot that kept losing its lid. The model would brighten the metal and even out the edges.

At this point, let’s be clear: as mentioned in our previous article, this was not an accident. The baseline AI model performs exactly as it was trained to. It has simply been fed such a vast, immeasurable amount of data that any form of subjective, affective nuance has been “sanitized” out of the end result. Now, it is very important to highlight that training models with more and more data did not wash away bias; it simply laundered it. Like that supermarket that camouflages illicit activity behind suspiciously low prices, the model has come to “dissimulate” its bias under a thick layer of “genericness”. (Generic does not mean unbiased. Quite the opposite: what counts as “generic” is itself a very charged and subjective question, but let’s not get into that now.) The output is flattened and characterless, which does not make it neutral. Kate Crawford calls AI a “system of extraction” that depends on extracting not only minerals and labor, but also ways of seeing, where the push to make everything into data produces a thin, standardized world. The quantity looks impressive, and yet the context has gone missing. No “situatedness”.

Once you accept that the gaze is partial, a second, older claim clicks into place: technologies carry politics. Langdon Winner wrote that artifacts embed forms of order: they carry inherent political properties and arrangements. The default parameters inside an AI image generator are not simple aesthetic preferences: they are small decisions about who appears, what they wear, and what objects count as “normal”. These defaults are drawn directly from the images that circulate the most. So whatever social pecking order governs the (far from neutral) circulation of images, that order will be inherited by the generated images too, reproducing subtle patterns of power with every new image put into circulation.

Surveys of bias in text-to-image systems confirm this pattern. Gender and skin tone have received the most attention from researchers, while geo-cultural bias has historically been less studied, even though it is often at the root of the oddest mismatches. Ask for a girl playing in a square in Portugal in the 1990s and the surrounding space may drift toward an abstract “European postcard,” or swing to a rural scene if the subject is described as Black. The prompt might try to pull the scene toward a place, but the model’s learned center of gravity (and false sense of objectivity) will pull it back.

The consequences of this spill outward. Editorials and studies keep warning that generative systems do not only reflect bias, but also amplify it, and that people then adapt to the output as if it were something neutral. Change the skin tone or the dialect in the same prompt, and the model often shifts more than appearance or voice. A “CEO” may move from a glass office in London to a modest room in Lagos. A “teenager hanging out after school” may switch from a leafy suburb to a dense urban block. A “family dinner” may gain or lose signs of wealth. The edit sounds like a minor cosmetic change, but its effects run much deeper. The model has absorbed patterns from its training data, so it links race and language to place, class, and power. That is the quiet move. It is not just style; it is a chain of learned correlations that reshapes the whole scene.
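One way to make that chain visible is a minimal-pair audit: generate two images from prompts that differ only in the identity term, have a reviewer tag each scene, and list every scene attribute that moved. The Python sketch below is only an illustration under stated assumptions. The `SceneTags` schema, the `unintended_shifts` helper, and the example labels are all hypothetical; the labels echo the CEO example above and are not measurements from any model.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SceneTags:
    """Reviewer-assigned labels for one generated image (hypothetical schema)."""
    setting: str
    location: str
    wealth_cues: str


def unintended_shifts(base: SceneTags, variant: SceneTags) -> dict:
    """Return every scene attribute that differs between the two outputs.

    The prompt pair is assumed to differ only in the subject's identity,
    so any attribute listed here changed without being asked for.
    """
    shifts = {}
    for f in fields(SceneTags):
        before, after = getattr(base, f.name), getattr(variant, f.name)
        if before != after:
            shifts[f.name] = (before, after)
    return shifts


# Illustrative labels only, not measurements from any model.
base = SceneTags(setting="glass office", location="London", wealth_cues="high")
variant = SceneTags(setting="modest room", location="Lagos", wealth_cues="low")

for name, (before, after) in unintended_shifts(base, variant).items():
    print(f"{name}: {before} -> {after}")
```

Run over many prompt pairs, the share of pairs with a non-empty result gives a rough sense of how often identity drags the rest of the scene along with it.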

So here’s a different bet. Instead of reaching for a “universal” viewpoint, let’s train and deploy situated models that proudly name their vantage point: disclose the data sources, name the place and the period that they are meant to represent, specify their limitations and what they are bad at. Let users choose the lens, not pretend there is no lens.

In the early days of Synthetic Memories, we tried a small, concrete move in this direction. We fine-tuned Stable Diffusion with LoRA on four tiny archives: interiors and exteriors from Barcelona from 1920 to 1940, and again from 1960 to 1980. Roughly fifty images per slice. The goal was not photorealism. It was texture, posture, light, the way a fireplace or a school desk looked in a very specific city at a very specific time. With LoRA, you freeze the base model and learn small low-rank updates that nudge the network toward a domain. It is cheap, fast, and reversible. Most of all, the change brought by fine-tuning was extremely instructive: prompts got shorter because the model already “knew” the grain of the era, clothes fell into place, fireplaces stopped defaulting to a single suburban template, and the gestures felt closer to the period.
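For readers who want to see what “freeze the base model and learn small low-rank updates” means in numbers, here is a toy sketch in plain Python. It is not our training code: the matrix sizes, the `lora_weight` and `lora_param_count` helpers, and the random “training” step are assumptions made purely for illustration. It follows the usual LoRA formulation, where a frozen weight `W` is combined with a scaled product of two small trainable matrices, `B` and `A`.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_weight(W, A, B, alpha, rank):
    """Return W + (alpha / rank) * (B @ A) as a new matrix; W is left untouched."""
    delta = matmul(B, A)
    scale = alpha / rank
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(W, delta)]


def lora_param_count(d_out, d_in, rank):
    """Trainable values in the adapter (A is rank x d_in, B is d_out x rank)."""
    return rank * (d_in + d_out)


random.seed(0)
d_out, d_in, rank, alpha = 6, 8, 2, 4.0  # toy sizes, for illustration only

W = [[random.gauss(0, 1) for _ in range(d_in)] for _ in range(d_out)]    # frozen base weight
A = [[random.gauss(0, 0.01) for _ in range(d_in)] for _ in range(rank)]  # trainable
B = [[0.0] * rank for _ in range(d_out)]                                 # trainable, starts at zero

# Before any training, B is zero, so the adapter changes nothing.
untrained = lora_weight(W, A, B, alpha, rank)

# Stand-in for training: give B some non-zero values (no real optimisation here).
B = [[random.gauss(0, 0.1) for _ in range(rank)] for _ in range(d_out)]
adapted = lora_weight(W, A, B, alpha, rank)

print("adapter is a no-op before training:", untrained == W)
print("adapter shifts the weights after training:", adapted != W)
print("values in a full 768x768 layer:", 768 * 768)
print("values in a rank-4 adapter for it:", lora_param_count(768, 768, 4))
```

Because `W` is never modified, dropping `A` and `B` returns the base model exactly, which is what makes the approach reversible. The small adapter size is what makes it cheap.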

Yet it’s also important to say that some limits stayed put. For example, if the base model was vague about certain objects, adding a LoRA did not rewrite the laws of physics, whether for hands or for handles. If the base model’s text-image link had weak spots, parameter-efficient tuning did not repair them. In short, the gaze became more local, but the bones of the gaze stayed the same. LoRA is a good wrench, not a new workshop. But once you accept those constraints, you can still build better systems by design.

In the process of building our own AI (on top of existing models) as part of our work on Synthetic Memories, we’ve focused on the following four layers of system design, which we’ve found to be a helpful framework for thinking about and building “situatedness” from the ground up.

- Data and model documentation - Publish model cards that spell out intended uses, failure modes, and group-wise behavior. Pair them with datasheets for the datasets, including how and why the images were gathered, licensing, gaps you could not fill, and k-nearest examples for common prompts so users can see the visual ancestry. This is not bureaucracy. It is a map of the gaze: the land it covers and where its borders lie.

- Controls that surface perspective - Expose sliders or toggles for place and time. For example, we let the user pick their settings: “Barcelona, interiors, 1960–1980”, and we make that choice visible in the metadata of every output. It’s also important to offer an “evidence tray” - in our case, we preview candidate source neighborhoods in the training set before image generation, not after. Borrow a lesson from academic citations: show your work.

- Participatory validation - With Synthetic Memories, we often work with communities to help re-form fragile memories. This has taught us how crucial it is to bring these groups and individuals into the loop as named reviewers, not as anonymous clicks. Build small test suites of objects, rooms, and gestures that are meaningful in that context, then make them part of the acceptance criteria, the way a validation set would be. For example, if we’re reconstructing a memory for clinical reminiscence (that is, reminiscence therapy for people living with dementia), a “memory generator” should fail a build if it keeps swapping a dented pot for stainless steel - such a “detail” changes the whole picture. This is also where fairness research meets practice. If your model shows fewer women than the ground truth in a set of prompts about scientists, or if it keeps shifting the space when the subject’s identity changes, you can catch and fix it.
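To make these three layers a little more concrete, here is a minimal Python sketch of how they could fit together: a model card that names its lens, a metadata stamp attached to every output, and an acceptance check built from details that named reviewers say must survive. Every name in it (`Lens`, `ModelCard`, `stamp_output`, `acceptance_failures`) and every example value is hypothetical and chosen for illustration; it is not a description of our production system.

```python
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class Lens:
    """The declared vantage point of a situated model."""
    place: str
    scope: str
    period: str


@dataclass
class ModelCard:
    """A deliberately small model card; real cards carry far more detail."""
    lens: Lens
    intended_uses: list
    known_failure_modes: list
    data_sources: list
    known_gaps: list = field(default_factory=list)


def stamp_output(image_id: str, prompt: str, card: ModelCard) -> dict:
    """Build the metadata attached to a generated image so the lens stays visible."""
    return {"image_id": image_id, "prompt": prompt, "lens": asdict(card.lens)}


def acceptance_failures(required: dict, reviewed: dict) -> list:
    """List the must-keep details that reviewers did not find in the outputs.

    required: memory id -> set of details the community says must appear
    reviewed: memory id -> set of details named reviewers confirmed in the image
    """
    failures = []
    for memory_id, details in required.items():
        missing = details - reviewed.get(memory_id, set())
        if missing:
            failures.append((memory_id, sorted(missing)))
    return failures


card = ModelCard(
    lens=Lens(place="Barcelona", scope="interiors", period="1960-1980"),
    intended_uses=["memory reconstruction with community review"],
    known_failure_modes=["hands and handles"],
    data_sources=["<archive name and license go here>"],
    known_gaps=["<gaps you could not fill>"],
)

meta = stamp_output("img-0001", "kitchen on a Saturday morning", card)

required = {"kitchen-memory": {"dented pot", "scratched tile"}}
reviewed = {"kitchen-memory": {"scratched tile"}}
failures = acceptance_failures(required, reviewed)

print(meta["lens"])
print(failures)
```

In a build pipeline, a non-empty `failures` list would be the signal to stop the release: the dented pot went missing, so the build fails.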

Don’t forget the policy layer! This one doesn’t sit at the system-design level: it operates at a much larger scale, which doesn’t make it any less crucial. It can mean a lot of things:

- To fund local models the way cities fund archives

- To encourage cultural institutions to publish digitized collections with licenses that allow this kind of bounded training, paired with community governance on use

- To require disclosure of training domains and intended contexts for high-impact imaging systems, much like geographic indications on food.

None of this stops global research; it simply recognizes that good seeing comes with an address. Now, two objections usually appear at this point. One says that small models will “fragment” the visual field. But that’s good! A plural public record beats a single smooth feed, because it mirrors the diversity of human perspectives and opinions across the population. The other objection says that global models will get so large and so “balanced” that the “local” angle will not matter. So far, the evidence points the other way. With every new baseline, we still see the same tilt: professions coded male or female when no gender was specified, narrow readings of beauty, and a tendency to place certain bodies in certain spaces. Surveys and audits keep finding and documenting the bias, then the news cycle moves on. And yet the slope remains, even as the models take on larger contexts and more data.

If the stakes feel abstract, think back to the person describing the kitchen tile. This is not only about art or taste. These images feed search results, hiring pipelines, product tests, and clinical tools. They inform decisions that affect us all. When outputs drift toward a narrow center, they do not only misremember the past. They guide what we expect to see next. Studies in other modalities show that, over time, human judgments adapt to the model’s bias, which means the model is not only reflecting a world, it is training one. This already happens with language: slowly but surely, we’ve started to adopt words and phrasing from the LLMs we use every day - why do you think everyone has started using “delve” all the time? It makes sense that it would extend to judgment and opinions.

So, here is a practical north star for anyone building or commissioning text-to-image today. Start with a place and time. Name them. Write the model card before you code. Gather a small, lawful, well-described archive. Invite the people who know that world to set the test images and to provide some of their experiential inputs. Start with parameter-efficient tuning if you must, then graduate to full fine-tuning when you can. Measure not only faces and counts, but also the objects, rooms, and postures that carry class, race, and gender in quiet ways. Publish misses. Treat the gaps as findings, not as marketing problems. There will still be surprises - and there should be! The point is not to freeze one orthodoxy in place. The point is to trade a false view from nowhere for many honest views from somewhere, each one accountable to its sources and its uses. The map is smaller, yes, but it is also thicker, dense with memories, nuances and dimensionality. In practice, that makes the act of seeing kinder - and it gives the dented pot its dent back.