TED has this specific talent, almost a cruel one, for putting on the same stage, in the same week, both people I genuinely admire and people whose work makes my skin crawl. As George Civeris brilliantly put it, it is one of the few spaces where you see both people that are trying to solve some of the most complex and difficult problems in the world, and the people that are causing them.

The first session of TED2026 opened with Adam Bry and Garrett Langley. Bry runs Skydio, the drone company that calls itself “America’s most trusted autonomous drone.” Langley co-founded Flock Safety, a surveillance outfit now sitting in over 5,000 American communities and scanning - I wish I were exaggerating - 20 billion vehicle plates a month.

They came to tell us what looked like a very clean story: that drones and cameras are saving kidnapped children, catching criminals before they run, and protecting infrastructure from burning. Langley claimed Flock Safety helps solve about 10% of reported U.S. crimes (Flock Safety, 2025). Bry showed drones flying patrol routes autonomously, spotting threats before anyone could dial 911. The tech is impressive, and I mean it. What didn’t sit right with me was everything around it.

The Whataboutism Olympics

As you can imagine, TED audiences are generally not the biggest supporters of mass surveillance - so, as a way to defend themselves against potential criticism, both speakers, separately, resorted to the same playbook.

Langley’s version: we all have smartphones in our pockets already, and this is no different.

Bry’s version: we already provide the police with guns and tasers, which are way more dangerous than a camera-equipped drone.

I kept waiting for the punchline. It didn’t come. They actually meant what they said.

The problem is, these aren’t arguments. They’re what logicians and rhetoricians call “tu quoque”, Latin for “you too”, which is a polite way of saying that the argument consists of changing the subject. A special case of the “ad hominem” attack, “tu quoque” is a fallacy because the existence of a worse thing does not make a bad thing acceptable. The fact that I carry an iPhone that knows where I sleep does not mean that I must welcome a government-operated drone grid hovering over my neighborhood. And the fact that cops carry Glocks does not warrant, in any way, the expansion of their digital panopticon.

Now, I get the appeal. A drone finds a missing kid - that’s a real, tangible outcome, a point in its favor. But the claim that this technology is “neutral” because other dangerous things exist too, and so, in the grand scheme of things, it’s really not that bad? That logic is as old as the arms industry itself. And the worst part is that it’s doing a lot of heavy lifting for people who’d rather not talk about what happens when the context shifts.

Ukraine, and the Slide Nobody Wanted

In another part of the world, the same autonomous flight systems and computer vision tech are having a different impact. In Ukraine, drone attacks jumped from 4,525 in 2023 to 19,704 in 2024, more than a fourfold rise (an increase of roughly 335%).

Langley brought it up himself. He had to. You can’t stand on a stage in 2026 talking about autonomous drone infrastructure without someone in the audience silently googling casualty statistics. So, here they are. Drones now account for around 80% of all battlefield casualties in the Ukraine-Russia war. Between February 2022 and April 2025, short-range attack drones killed 395 Ukrainian civilians and wounded 2,635 more. By 2025, Ukrainian drone forces alone had killed or seriously injured over 240,000 Russian soldiers. These are the same categories of technology being discussed on the TED stage - autonomous navigation, object recognition, geofencing - just reconfigured.

Bry’s response was essentially: the technology will exist regardless, so we should be the ones building it responsibly. Which is, to be fair, not nothing. But it’s also the founding logic of every weapons program since the crossbow. “Better us than them” has a long and not particularly reassuring track record.

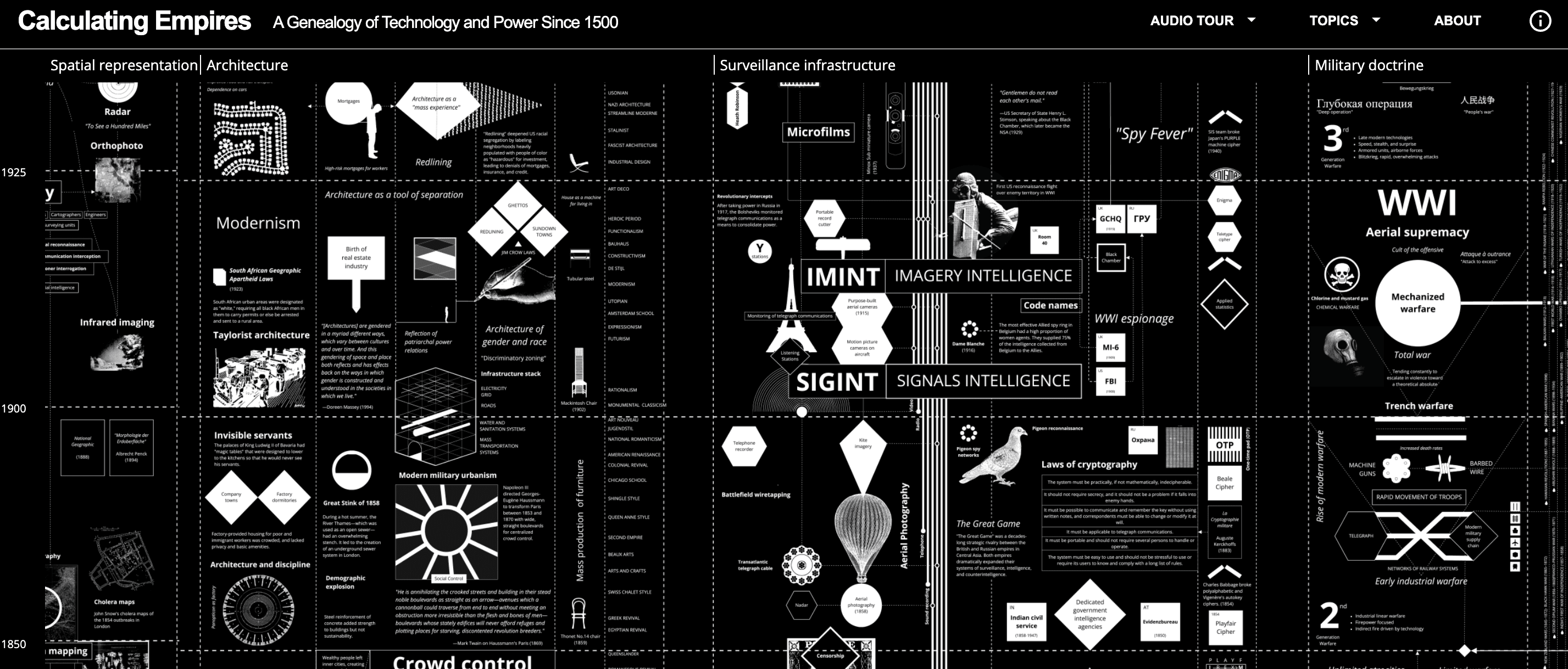

The Infrastructure Is Inherited

Now, here’s what neither man talked about, and I couldn’t stop thinking about it.

It’s not just that surveillance infrastructure is inherently political. It goes beyond that. The word “political” (from “pólis”, the “city” in Ancient Greek) can connote something collective, almost public. That couldn’t be further from the truth here - the real issue is that surveillance infrastructure always has an owner. There are all types of owners in the world, and they can change.

Flock Safety built its license plate readers for crime prevention. That’s what they say, and I believe that’s what they believe. But documented reporting shows that roughly 4,000 immigration-related searches were run through Flock’s national network, searches that Flock’s own policy explicitly prohibits. Local police departments, operating under pressure from a federal administration running the most aggressive immigration enforcement campaign in recent U.S. history, used the grid to track undocumented immigrants. In Virginia alone: nearly 3,000 such searches in one 12-month stretch. Denver: over 1,400 cases, with 690 of them coming after Trump’s January 2025 inauguration. In Texas, a school district’s security cameras, part of the Flock network, were searched over 733,000 times in a single month, including 620 immigration-related lookups.

School cameras - used to look for parents. Flock eventually suspended access in California, Illinois, and Virginia - but only in June of 2025, months after the searches had already happened. The company had built something for public safety; then the administration changed, and with it the definition of “threat”.

When you build surveillance at scale, you are not building it for this government only. You are building it for every government that follows. For entities that might use it for purposes beyond the ones you imagined. Those lines should probably hang in the boardroom of every public safety tech startup. The fact that they don’t tells you something about the time horizon considered by the people making these decisions, which is roughly the distance between now and the next funding round.

More Eyes, More Cells, Same Problems

And here’s the part that really gets me, the part where the logic breaks down if you zoom out even slightly.

Both Bry and Langley are trying to make communities safer. I don’t doubt that. But “safer” through surveillance means something very specific. It means catching more people after they do something wrong. And catching more people means more arrests. That sets off the vicious cycle of the prison system, which only gets worse once race and poverty enter the picture.

This isn’t just speculation. When Chicago deployed its Strategic Subjects List, a predictive policing algorithm, researchers found no reduction in gun violence victimization, but they did find more arrests. Studies on Automated License Plate Readers show limited evidence of crime reduction despite vastly expanded data collection. It’s not that, thanks to these kinds of surveillance technologies, people stopped committing crimes. It’s just that we got better at catching people. But we did not get better at producing fewer people who needed catching.

Criminologists have a term for this: net-widening. You build a finer mesh, you catch smaller fish. Electronic monitoring, surveillance cameras, license plate readers - they expand the system’s reach into communities that were already over-policed and under-resourced. Recidivism data backs this up: 60% of released prisoners are rearrested within two years. Incarceration itself does not reduce crime. It cycles and churns people through.

So, the question I wanted to ask from my seat was this: If your goal is really to have fewer crimes, shouldn’t you be focusing on that? More prisoners are not a measure of less crime, so they’re not something to aim for.

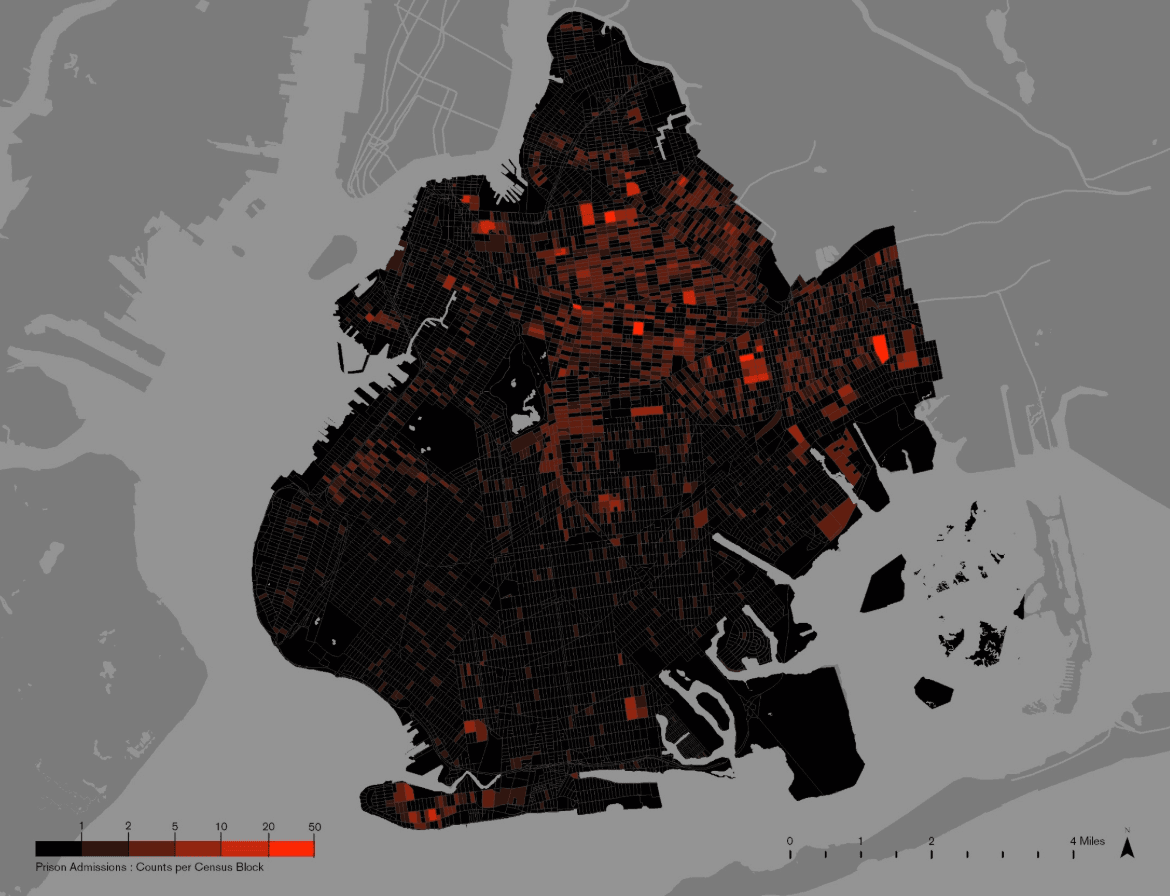

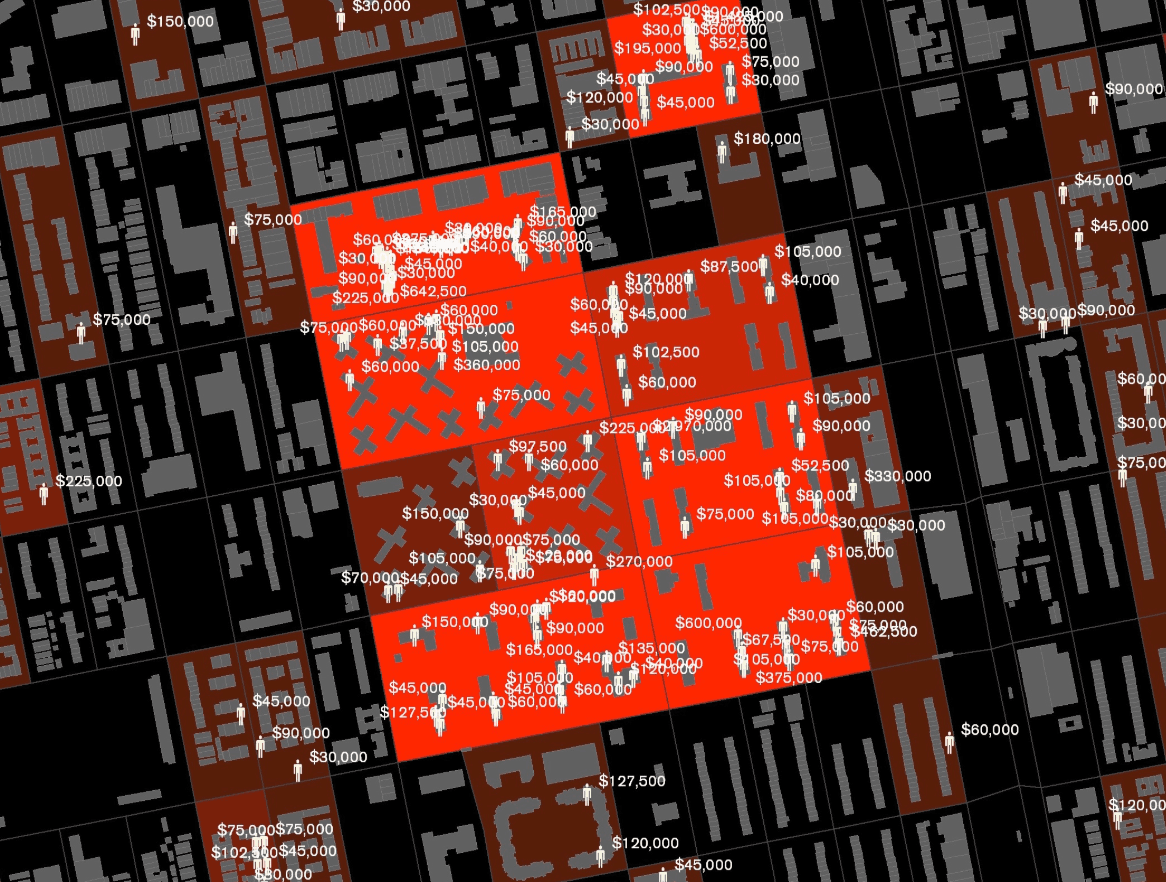

The Money Is Already There. It’s Just Downstream.

In 2006, researchers at Columbia University did something simple and devastating. They mapped where New York State was spending its prison budget. Not by county, not by borough. By block. Other research groups followed suit, doing similar calculations for their own cities - Philadelphia, LA…

What they found: the spending was absurdly concentrated. Eleven city blocks in Brooklyn cost taxpayers $11.8 million per year in incarceration alone. In Philadelphia, taxpayers spent $290 million to lock up residents from 11 neighborhoods, while the school district across the same zip codes faced a $147 million budget shortfall. In Los Angeles, 67% of low-performing schools sat inside the highest-incarceration neighborhoods; 68% of high-performing schools sat in the lowest.

They called it the “Million Dollar Blocks” project. And the point was not that prison is expensive; everyone knows that. The point they were trying to make was that the money needed to make a difference at the root of the issue already exists. We are already spending it. We’re just spending it at the end of the pipeline, after the failure, after the harm. What if you redirected it upstream? Into schools on those same blocks. Into job programs. Into housing. Into the things that, according to every somewhat serious study of crime causation, actually change whether someone ends up in a cell or a classroom.

This is what I mean by the difference between short-term and long-term thinking. Cameras catch criminals, but they don’t make fewer criminals. They (debatably) improve the short-term situation, while worsening the long-term one. Similarly, drones respond to threats. They don’t change the conditions that produce them. And if you never look upstream at those conditions, you end up with a very expensive, very well-surveilled pipeline from poverty to prison, and a TED talk about how well the pipeline works.

What Actually Works (And Doesn’t Fit on a Slide)

Now, I’m not arguing for a world without security technologies. That wouldn’t be a serious position - and it would be very naive. But I am arguing that the ratio is off. Way off. And that there are people doing the harder, less photogenic version of “making communities safer” who deserve three times the airtime and a tenth of the skepticism.

A few examples:

- READI Chicago, run out of the University of Chicago Crime Lab, put high-risk men through cognitive behavioral therapy and transitional employment. The result: a 79% reduction in arrests for shootings and homicides among participants referred by outreach workers, and a 43% drop in victimization. Not extra surveillance. Just a conversation and a paycheck.

- The Cure Violence model treats gun violence the way epidemiologists treat a disease, through community-level intervention, violence interrupters, and behavioral change. It has shown statistically significant reductions in shootings across multiple cities.

These programs are harder to scale than a drone grid. They’re less visually dramatic. But they address the input, not just the output. They make fewer criminals instead of catching more of them. And that’s a fundamentally different kind of safety, one that doesn’t swing in one direction or another depending on who moves into the White House every four years.

Bry and Langley are good at what they do. Their tech does real things. But presenting automated watching as a solution to the fact that some communities are broken by poverty and neglect is, at best, a category error. At worst, it’s the kind of thinking that builds very good tools and then finds out, two administrations later, who they were used for.

When you’re building drones, the sky isn’t the limit. But it raises another question: what you’re looking for once you’re up there. And whether you ever thought to look down.